Data comparisons and the DBMS_COMPARISON package

When doing building and validating an Oracle GoldenGate implementation, understanding the data goes a bit deeper than only using row counts between the source and target databases. Doing validations is essential to having successful GoldenGate implementation and there are a few tools out that can be used to achieve this.

These products are:

- Oracle Veridata (Oracle GoldenGate Tool) – which is great, but a lot of customers do not spring for this options

- RedGate’s Data Compare for Oracle – is a good tool for smaller datasets, but struggles with larger data sets on comparisons. Customers choose this due to cost

- Oracle’s DBMS_COMPARISON Package – this is a great, down, and dirty way of getting comparisons within the Oracle database. Limited to the Oracle database though.

As I’ve been working on a large scale Oracle GoldenGate implementation for a customer, the need to validate data became apparently very quickly. The customer always relied on row counts as the measure of success; however, as Oracle GoldenGate was processing data, we quickly identified that the data was diverging leading to other data related issues down the line. Since the customer didn’t have Oracle Veridata and RedGate was taking upwards of three hours to compare, we needed a faster solution – DBMS_COMPARISON.

The DBMS_COMPARISON package is great tool within the Oracle Database. From looking at the documentation, you can create, compare, and recheck compares as needed. All of which is contained within the database where the comparison is ran from. For an added bonus, compares can be ran over dblinks as well. Making migration comparisons quick and simple.

Create Comparison

Before any compares can be ran, a comparison has to be created. This is done by using the DBMS_COMPARISON.CREATE_COMPARISON procedure. In this case, we are going to compare two local database (test environment after all).

BEGIN

DBMS_COMPARISON.CREATE_COMPARISON(

comparison_name => 'TSTUSR_COMPARE_1’,

schema_name => 'TSTUSR’,

object_name => 'RANDOM_LRG’,

dblink_name => null,

remote_schema_name => 'TSTUSR1’,

remote_object_name => 'RANDOM_LRG');

END;

/

In the create compare statement above, you see that we are creating a compare called “TSTUSR_COMPARE_1”. This name will be referenced in other parts of the comparison setup. Additionally, you see the sourch and target schema and table to compare. The dblink_name is null because we are comparing locally.

Run Comparison

With the comparison created, now it can be ran. To run a comparison simply run DBMS_COMPARISON.COMPARE procedure. That sounds a bit to simple though. In order to run it, you’ll have to wrap it in a PL/SQL block.

SET SERVEROUTPUT ON

DECLARE

vScanInfo DBMS_COMPARISON.comparison_type;

vScanResult BOOLEAN;

BEGIN

vScanResult := DBMS_COMPARISON.COMPARE(

comparison_name => 'TSTUSR_COMPARE_1’,

scan_info => vScanInfo,

perform_row_dif => TRUE);

IF NOT vScanResult THEN

DBMS_OUTPUT.put_line('scan_id =' || vScanInfo.scan_id);

ELSE

DBMS_OUTPUT.put_line('No diffs’);

END IF;

END;

/

Reviewing Comparison

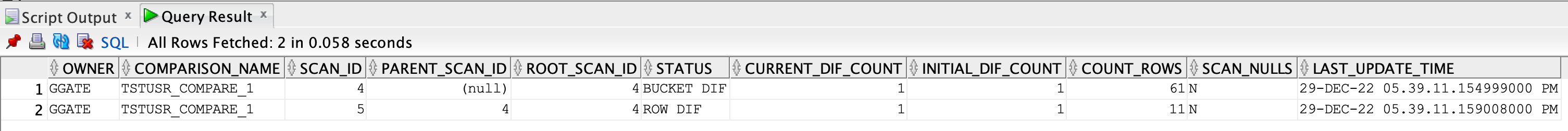

Notice that we set the server output on. This is so the procedure will return the scan id we need to identify the scan within the database by looking at the DBA_COMPARISON_SCAN view. A simple query like the one below will return all scans from the execution:

select * from dba_comparison_scan where scan_id = 4 or parent_scan_id = 4;

To find any differences between the tables, the DBA_COMPARISON_ROW_DIFF view can be used. Building on what was done above, the following query can be ran:

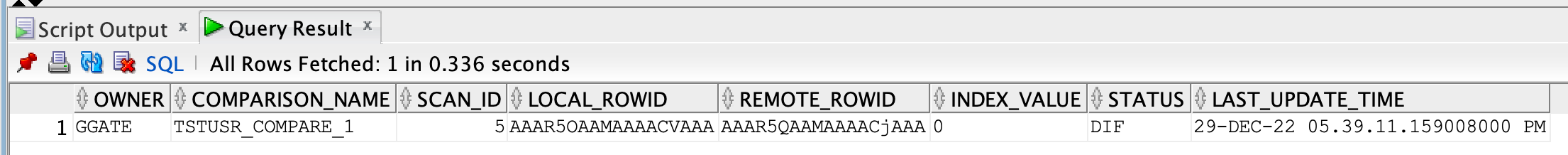

select * from DBA_COMPARISON_ROW_DIF where scan_id in (select scan_id from DBA_COMPARISON_SCAN where scan_id = 4 or parent_scan_id = 4);

This query shows that there is a difference between the tables (STATUS column).

In order to find out what is different between the tables, all you have to do is reference the rowid. LOCAL_ROWID is the source record. REMOTE_ROWID is the target record. A query similar to this can be used.

select * from tstusr.random_lrg where rowid = 'AAAR5OAAMAAAACVAAA'

union all

select * from tstusr1.random_lrg where rowid = 'AAAR5QAAMAAAACjAAA';

As you can see, the rows are different by one column (RANDOM_TXT).

At this point, you can repair the data (if needed) or let Oracle GoldenGate finish syncing and see if an update statement may have been processed.

This post is meant to help you get an understanding that row counts alone during a migration is not going to achive the definition of success. If row counts are 100%, that doesn’t mean the data is 100%. Always drive a bit deeper during migrations to ensure that everything is accounted for.

Enjoy!!

Very wonderful info can be found on blog.Raise blog range

Very interesting info!Perfect just what I was looking for!Expand blog